Every business process generates data that is used for decision making and for developing strategic plans. In other words, data guides business decisions. A sound, well-built data strategy helps ensure that data is indeed driving the right decisions. Without a data strategy and governance plan, information gets lost, data access is impeded and companies are unable to leverage the full potential of their data. This can also be very costly in the long run: without appropriate data access and governance, managers and employees alike can misuse and exploit data to fit their own hypotheses and decisions. The result can be an environment lacking trust and coherence and, in the worst case, one in which the executive level has little or no visibility of departmental data.

A data strategy is essential for defining the scope and objectives of the data management scheme within an enterprise. It comprises a set of decisions that form a high-level framework for extracting maximum value from the data, guiding the data from the initial need to its use for the required impact. Core to an enterprise-level data strategy is the elimination of data silos (unilateral access and authority over stored, unconnected data), data redundancies and other bottlenecks that hamper the desired flow of data within the company. Other important aspects of a data strategy are a structured data governance program; data sharing with lineage tracking and provenance frameworks, supported by accepted metadata models and standards (embedded within data catalogs); and a data management scheme that respects the FAIR Guiding Principles. These principles facilitate knowledge discovery by making data Findable, Accessible, Interoperable and Reusable, thus assisting both humans and machines in discovering, accessing, integrating and analysing it.

The journey of data through an enterprise is known as the data lifecycle; at The Hyve, we refer to it as the data value lifecycle. When we assess a client's data lifecycle, our core aim is to extract maximum value from the data assets as they progress through a sequence of stages, from the initial need for and creation of the data to its eventual deletion or archival at the end of its useful life. I have defined a higher-level, modular visual framework for assessing the data value lifecycle that provides a holistic view of the corporate data environment: existing software, data, files and processes. The framework reveals at a glance what is required and what is missing for data to travel through the different departments while serving the needs of different users and systems. Any module of the framework that is missing from your corporate data environment implies that data is not being used to its full potential, resulting in a loss of resources (time, money and effort).

This framework is divided into six modules:

1. Siloed Data

Siloed data, ground zero of any digital transformation program, is data generated with a specific purpose in mind: no reuse is planned or in scope, the data resides on isolated hard drives, and it has no or inadequate metadata. In most cases, siloed data is subject to unilateral access and authority, which makes it easy to abuse, and it has a particularly short life. Siloed data is commonly shared on USB drives, cloud or network drives (NAS), SharePoint sites and even by email. In most cases it has little or no potential for sharing and reuse. Machines cannot make use of this data on their own because it lacks (sufficient) metadata, so AI (artificial intelligence) programs typically fail to include and process it. Well-known examples of silos are found in departments within hospitals and academic institutions, in the plant, animal and nutrition industries, and in many pharmaceutical companies. Roughly three quarters of data-generating departments worldwide are at this stage. Data silos arise and persist for structural reasons (custom software operating on specific datasets), political reasons (proprietorship among data owners), cultural reasons (lack of knowledge and unwillingness to change) and bureaucratic reasons (vendor lock-in).

2. Data Inventory

To move data out of silos, the first step is to inventory the in-company applications that generate, operate on, consume, process, store and archive data. The next step is to inventory the data itself. A good data catalog can simplify data discovery at scale and provide a foundation for a sound data governance program. Choosing and populating a data catalog can, however, be a challenge in itself.

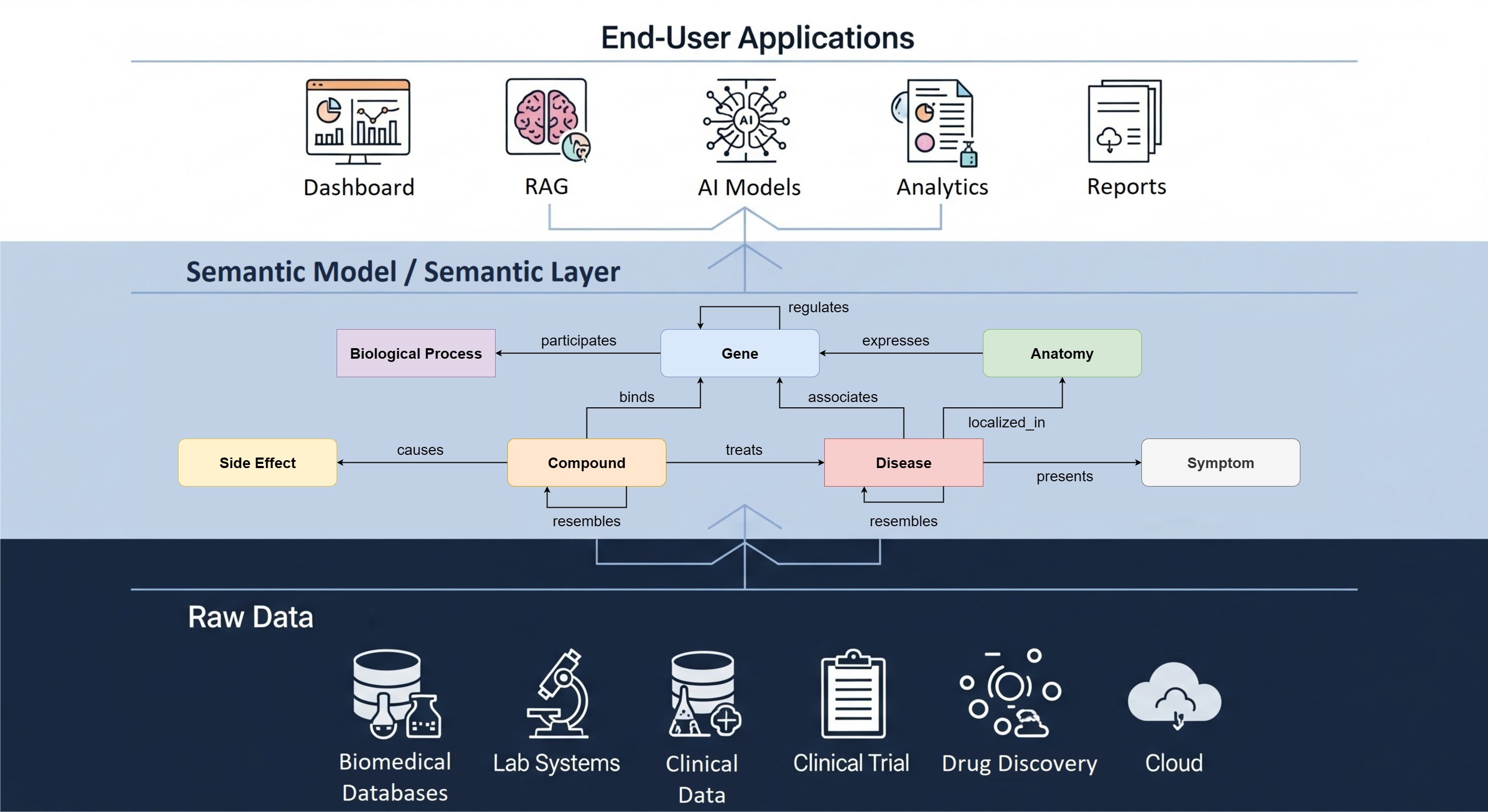

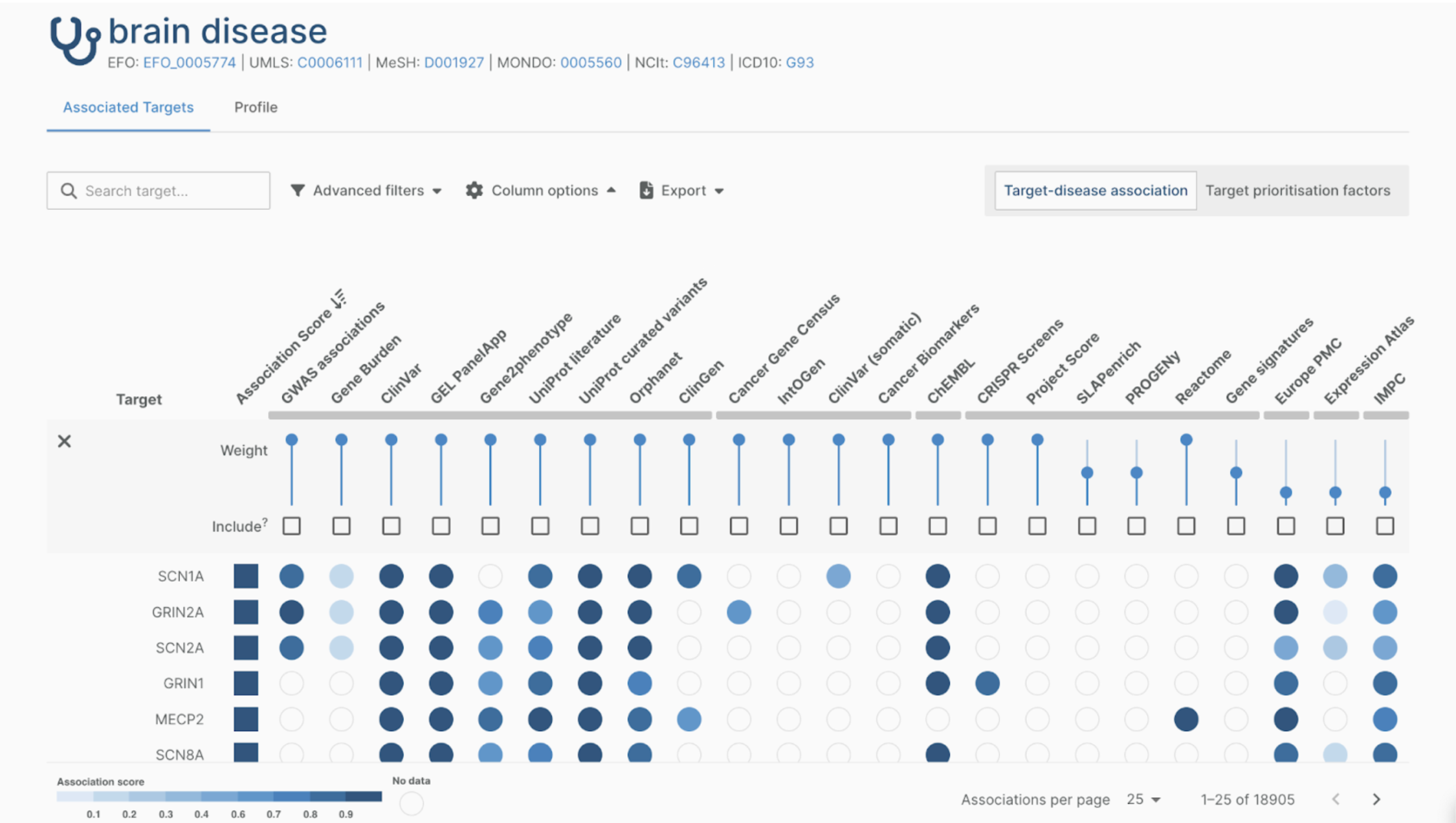

As part of our Data Landscape Exploration, The Hyve can carry out such data and application inventories, which can then be used to populate a data catalog of choice. A data landscape is the representation of an organization's data assets, its systems for creating, analysing, processing and storing data, and the other applications present in its data environment. For this service, our team gathers information on all data sources within the organization (databases, data warehouses, data lakes), all systems (research, clinical, commercial), data types (high-throughput sequencing datasets, experimental images, text files) and the actual data (system extracts, data catalog dumps). These components can then be scored, ranked and mapped to a conceptual or semantic model using our custom algorithms. The populated model is visualized as a dashboard and as static or dynamic plots and, for complete coverage and flexibility, a knowledge graph is generated. This enables company executives and researchers to answer questions like:

- Where is my experiment data?

- Which department is using the Q-TOF mass spectrometer?

- Where can I find the raw sequencing files from the X CRISPR construct, and who owns them?

- Which clinical trials are linked to the PD-L1 gene?
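To make the idea concrete, here is a minimal sketch of how questions like these map onto a knowledge graph: facts are stored as subject-predicate-object triples, and a question becomes a pattern match over them. The entities and predicate names (`uses_instrument`, `linked_to_gene`, `TRIAL-001` and so on) are hypothetical, and this is not The Hyve's implementation; a production graph would normally live in a dedicated graph store queried with a language such as SPARQL rather than in a Python set.

```python
# Hypothetical (subject, predicate, object) triples standing in for a knowledge graph.
TRIPLES = {
    ("Proteomics", "uses_instrument", "Q-TOF mass spectrometer"),
    ("Metabolomics", "uses_instrument", "Q-TOF mass spectrometer"),
    ("Genomics", "uses_instrument", "DNA sequencer"),
    ("TRIAL-001", "linked_to_gene", "PD-L1"),
    ("TRIAL-002", "linked_to_gene", "PD-L1"),
    ("TRIAL-003", "linked_to_gene", "EGFR"),
}


def query(triples, subject=None, predicate=None, obj=None):
    """Return the triples matching a pattern, sorted; None acts as a wildcard."""
    return sorted(
        t for t in triples
        if (subject is None or t[0] == subject)
        and (predicate is None or t[1] == predicate)
        and (obj is None or t[2] == obj)
    )


def subjects(triples, predicate, obj):
    """Return every subject connected to `obj` through `predicate`."""
    return [s for s, _, _ in query(triples, predicate=predicate, obj=obj)]


# "Which department is using the Q-TOF mass spectrometer?"
print(subjects(TRIPLES, "uses_instrument", "Q-TOF mass spectrometer"))
# -> ['Metabolomics', 'Proteomics']

# "Which clinical trials are linked to the PD-L1 gene?"
print(subjects(TRIPLES, "linked_to_gene", "PD-L1"))
# -> ['TRIAL-001', 'TRIAL-002']
```

The wildcard pattern is the same idea a graph query language generalizes: any combination of known and unknown positions in a triple can be asked about.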

This flyer is an example of a use case we executed for a Top 10 pharma client, which resulted in a knowledge graph that is consulted regularly to answer questions from research and business departments alike. For this project, The Hyve inventoried and ranked data systems from a master list of thousands of sources and narrowed these down to a few dozen key systems handling the highest-impact data. We then developed a single conceptual model of the data landscape. We collected the information for the data landscape during visits to multiple sites and departments, interviewing about fifty stakeholders across a range of pharmaceutical divisions around the globe.

3. Data Lineage

This step of the framework involves actually tracking data between departments and divisions, between systems and storage locations, and between producers and consumers, all the way from its origin through its usage to its archival or deletion. Several open-source tools are available to implement and visualize this journey of data.
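At its core, lineage can be modelled as a directed graph in which an edge from A to B means that B was derived from A. The minimal sketch below assumes exactly that; the `LineageGraph` class and the system names are hypothetical, and dedicated lineage tools add automated capture, storage and visualization on top of this basic idea. Walking the graph backwards answers "where did this come from?", and walking it forwards answers "what is affected if this changes?".

```python
from collections import deque


class LineageGraph:
    """Directed graph in which an edge source -> target means target was derived from source."""

    def __init__(self):
        self.parents = {}   # node -> set of nodes it was directly derived from
        self.children = {}  # node -> set of nodes directly derived from it

    def record(self, source, target):
        """Record that `target` was produced from `source`."""
        self.parents.setdefault(target, set()).add(source)
        self.children.setdefault(source, set()).add(target)

    @staticmethod
    def _reachable(start, edges):
        """Breadth-first search returning every node reachable from `start` via `edges`."""
        seen, queue = set(), deque([start])
        while queue:
            node = queue.popleft()
            for nxt in edges.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def upstream(self, node):
        """All origins of `node`, direct and indirect."""
        return self._reachable(node, self.parents)

    def downstream(self, node):
        """All assets derived from `node`, directly or indirectly."""
        return self._reachable(node, self.children)


# Hypothetical systems in a data flow.
lineage = LineageGraph()
lineage.record("instrument_raw_files", "lims")
lineage.record("lims", "data_warehouse")
lineage.record("sequencing_raw_files", "data_warehouse")
lineage.record("data_warehouse", "executive_dashboard")

print(sorted(lineage.upstream("executive_dashboard")))
# -> ['data_warehouse', 'instrument_raw_files', 'lims', 'sequencing_raw_files']
print(sorted(lineage.downstream("lims")))
# -> ['data_warehouse', 'executive_dashboard']
```

Keeping both parent and child maps makes impact analysis (downstream) as cheap as provenance tracing (upstream).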

4. Data Governance

DAMA International (the Data Management Association International) defines data governance as the exercise of authority and control (planning, monitoring and enforcement) over the management of data assets.

A data governance program can be subdivided into three building blocks:

- People: An authoritative data governance team is the driving force behind any data governance program. Data stewards, chief data officers and business IT teams are an important part of this team.

- Enablers: Enablers are the tools and technology that help implement the modules of the data governance program. Examples are monitoring and reporting software, data quality solutions, structured ontologies and business glossaries, data catalogs, metadata stores, lineage tracking, semantic provenance frameworks and technology that helps enforce business rules.

- Processes: Processes ensure that data meets precise standards and follows business-dictated rules as it is entered into a system (making data FAIR) or generated by a system (FAIR by design). Change management and cultural integration are a key part of this building block.

In short, People guide the tactical execution of a data governance program using Enablers and Processes.
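The Processes building block can be illustrated with a minimal sketch of a rule enforced at the moment data enters a system, so that records are compliant by design rather than repaired later. The required fields, the `ds-` identifier prefix and the function names are hypothetical examples of business-dictated rules, not a standard; in practice such rules would be set by the governance team and enforced through the Enablers.

```python
from datetime import date
from typing import Dict, List

# Hypothetical policy: metadata fields every new dataset record must carry.
REQUIRED_FIELDS = {"identifier", "title", "owner", "license", "created"}


def validate(record: Dict[str, str]) -> List[str]:
    """Return a list of rule violations; an empty list means the record is accepted."""
    problems = [f"missing field: {name}" for name in sorted(REQUIRED_FIELDS - set(record))]
    identifier = record.get("identifier")
    if identifier is not None and not identifier.startswith("ds-"):
        problems.append("identifier must start with 'ds-'")
    created = record.get("created")
    if created is not None:
        try:
            date.fromisoformat(created)  # raises ValueError for non-ISO dates
        except ValueError:
            problems.append("created must be an ISO 8601 date (YYYY-MM-DD)")
    return problems


def ingest(record: Dict[str, str], store: List[Dict[str, str]]) -> None:
    """Admit a record into the store only if it passes every rule."""
    problems = validate(record)
    if problems:
        raise ValueError("; ".join(problems))
    store.append(record)


store: List[Dict[str, str]] = []
ingest({"identifier": "ds-0001", "title": "RNA-seq batch 7", "owner": "Genomics",
        "license": "CC-BY-4.0", "created": "2021-03-01"}, store)
print(len(store))  # -> 1
print(validate({"identifier": "exp42", "title": "Plate reader export", "created": "01/03/2021"}))
```

The second call reports every violation at once (two missing fields, a bad identifier and a non-ISO date), which is usually more helpful to the person entering data than failing on the first problem.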

5. Data Share

Data sharing is the fifth stage of the data value lifecycle. When releasing information, accessibility must be regulated by relevant agreements and balanced against the need to restrict the availability of classified, proprietary and business-sensitive information. Data catalogs provide a unified space in which users can discover and evaluate data, and they also assist with automated metadata management. Additional custom parameters such as data quality, FAIRness score and current impact can be attached to the data within the catalog itself as metadata attributes. These parameters add an extra layer of filtering over the datasets. The interconnections between data assets and the systems operating on them can be represented using tools such as dashboards, static and dynamic plots, and knowledge graphs. We define a knowledge graph as a means of visualizing a collection of interlinked descriptions in a specified knowledge domain, consisting of entities and their relationships, interfaces and data flows. Modern data catalogs can establish semantic relationships between data and metadata automatically, and these are primarily visualized as a knowledge graph.
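The extra filtering layer that custom metadata attributes provide can be sketched in a few lines. The `CatalogEntry` class, the attribute names `quality` and `fairness_score`, and their 0 to 1 scale are assumptions for illustration only; real data catalogs expose their own data models and search interfaces.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CatalogEntry:
    name: str
    owner: str
    # Hypothetical custom metadata attributes, e.g. quality or FAIRness score (0 to 1).
    attributes: Dict[str, float] = field(default_factory=dict)


def filter_entries(entries: List[CatalogEntry], **minimums: float) -> List[CatalogEntry]:
    """Keep entries whose attributes meet every given minimum; a missing attribute fails."""
    return [
        e for e in entries
        if all(e.attributes.get(key, float("-inf")) >= value for key, value in minimums.items())
    ]


catalog = [
    CatalogEntry("rnaseq_batch_07", "Genomics", {"quality": 0.92, "fairness_score": 0.8}),
    CatalogEntry("assay_results_2019", "Biology", {"quality": 0.65, "fairness_score": 0.4}),
    CatalogEntry("imaging_study_12", "Pathology", {"quality": 0.88}),
]

print([e.name for e in filter_entries(catalog, quality=0.8, fairness_score=0.7)])
# -> ['rnaseq_batch_07']
```

Treating a missing attribute as failing the filter is a deliberate choice here: a dataset that has never been scored should not silently pass a quality threshold.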

A modern data catalog, with integrated metadata management and a visual overview of the data assets, is a crucial step towards making data discoverable and thus accessible to humans. With accepted (FAIR) metadata standards and interoperability (ontologies, vocabularies) in place, the data also becomes accessible to machines (systems and software operating on data) and is thus AI-ready. Some data catalogs have a higher potential to be FAIR than others, as discussed in Testing the FAIR metrics on data catalogs. In the complex environment of pharmaceutical research and development, automated machine access to high-volume, high-variety and high-velocity information is essential for AI to perform well. A prerequisite for any AI-centric project is to respect the FAIR Guiding Principles and integrate them into the enterprise data strategy. Many AI-centric projects fail because of a lack of data or of access to it, absent or fuzzy metadata, and little or no semantic interoperability. Data sharing and access are not only important for AI; they also guarantee sustainable long-term access to data while reducing costs considerably.

Read more in the EU report: Turning FAIR into reality (DOI: 10.5281/zenodo.1285272)

6. Data Value

As discussed above, moving from a closed, silo-based approach towards open, distributed and federated infrastructures requires important changes in tools, methods and mentalities. Data strategy is more than the collection, annotation and archival of an organization's data assets; it has the higher goal of ‘long-term care’ for the company’s valuable digital assets, making them discoverable and reusable for different data users, groups and business executives in an easily accessible and consistent manner.

Top executives generally view data as a critical asset that must be managed, nurtured, enriched and delivered in a timely manner, through applications and systems, to employees, customers and partners. A department's key assets are the data and associated information it generates and manages. This data should be generated, managed and governed in compliance with the enterprise data strategy. Doing so keeps the data under control as a company asset, which in turn enables executives to control and manage the associated business processes. In the end, it is much easier and more efficient when companies have a “FAIR” mindset from the start: data should be findable, accessible, interoperable and reusable for both humans and machines. In GO-FAIR terminology, we call this process GO-CHANGE. A well-defined FAIR data strategy is key to extracting maximum value from data and being AI-ready, and it is essential for defining the scope and objectives of the data management scheme within an enterprise.

It is worth noting that the framework described above is not necessarily a linear process; it is modular, and several modules can overlap or run in parallel. Several variations are shown below. Naturally, the framework varies with the characteristics of an enterprise data office, whether it operates within a pharmaceutical company or any other data-driven business.