Remote trials are a much cheaper and much less burdensome way of collecting participant data than traditional trials. However, one of the biggest risks for researchers running remote trials is data loss, especially if it is not detected early.

The affordability of remote trials compared to traditional studies is only relative, though. Clinical or participant trials are always an expensive but necessary way to validate a novel drug or therapy. One way to collect data in a remote trial is to use a smartphone, wearable device or ePRO (electronic patient-reported outcome).

The volume of data collected in remote trials can be large, and the data are very valuable. So how do we ensure that no data are lost along the way, considering all the ‘moving parts’ in such a remote trial?

This post will address some technical solutions to prevent data loss and explain how the open-source RADAR-base stack contributes to that. First, we will look at data storage in the individual components, then at how to orchestrate them to prevent data loss.

Step-by-step reliability

First off: the mobile data collection device. It is the most essential part of the remote trial, but also the hardest to control. Multiple strategies can be employed, though, to ensure the data is collected as planned.

On a smartphone, there are a number of technical solutions available to help keep the data collection app running: background processing, location handling and push notifications. At the same time battery usage should be kept low, which is a tricky balance to achieve. The app should be stable: it should restart when the phone is restarted and after fatal exceptions, and the data should be stored safely even if the device crashes. That last aspect is not trivial: a data format should be chosen that does not corrupt any of the available data if the app is stopped while writing to a file. Also, the data should not be kept in the phone’s memory but really written to file to minimize the chance of data loss.

In RADAR-pRMT, the app for passive data collection in the RADAR-base stack, the data is written to a storage format inspired by Tape. This format writes streaming data to a file-backed queue, which keeps the chance of errors low. High-frequency measurements are batched before they are stored, to avoid placing a high demand on the storage system.
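To make the idea of a crash-safe, file-backed queue with batching concrete, here is a minimal sketch. It is not the RADAR-pRMT or Tape file format; the record framing (length plus CRC32), the function names and the file layout are assumptions chosen for illustration. Each batch is appended and flushed in one go, and a record that was only partially written when the app stopped is simply ignored on the next read, so earlier records are never corrupted.

```python
import os
import struct
import zlib

# Hypothetical framing: 4-byte payload length followed by 4-byte CRC32.
HEADER = struct.Struct(">II")


def append_batch(path, records):
    """Append a batch of byte records with a single flush and fsync."""
    with open(path, "ab") as f:
        for payload in records:
            f.write(HEADER.pack(len(payload), zlib.crc32(payload)))
            f.write(payload)
        f.flush()
        os.fsync(f.fileno())  # ask the OS to put the batch on storage


def read_valid(path):
    """Return all intact records, stopping at the first torn or corrupt one."""
    if not os.path.exists(path):
        return []
    with open(path, "rb") as f:
        data = f.read()
    records = []
    pos = 0
    while pos + HEADER.size <= len(data):
        length, crc = HEADER.unpack_from(data, pos)
        start = pos + HEADER.size
        payload = data[start:start + length]
        if len(payload) < length or zlib.crc32(payload) != crc:
            break  # incomplete write at the tail: ignore it
        records.append(payload)
        pos = start + length
    return records
```

Batching many measurements into one `append_batch` call means one `fsync` per batch rather than one per measurement, which is the trade-off between storage load and the amount of data at risk in memory.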

A technical solution alone is not enough. Even after all technical measures have been taken to avoid disruption of data collection, human error or circumstances outside the app's control can still cause problems. For example, the trial participant may delete the app entirely, have a malfunctioning device, switch off the phone or not be connected to a network.

In such a case, it is up to the trial staff to detect that the data collection is not going as planned and contact the participant. To be fair, this is business as usual: keeping track of compliance. The difference is that in a trial with wearable devices it’s much easier to keep track of compliance since all the data ends up at the same data collection platform.
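Because all data ends up on one platform, part of this compliance check can be automated. The following is a minimal sketch, assuming the platform can report the timestamp of the most recent record per participant; the function name, the 24-hour threshold and the shape of the input are hypothetical and not part of RADAR-base.

```python
from datetime import datetime, timedelta, timezone


def find_silent_participants(last_seen, now, max_gap=timedelta(hours=24)):
    """Return the IDs whose most recent record is older than max_gap, sorted.

    last_seen: dict mapping a participant ID to the timestamp of the
    latest record received for that participant (assumed input).
    """
    return sorted(pid for pid, ts in last_seen.items() if now - ts > max_gap)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    last_seen = {
        "participant-a": now - timedelta(hours=2),
        "participant-b": now - timedelta(days=3),
    }
    for pid in find_silent_participants(last_seen, now):
        print(f"No data from {pid} in the last 24 hours; contact participant")
```

The right threshold depends on the protocol: a device that only syncs over Wi-Fi may legitimately stay silent for longer than one that uploads over mobile data.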

Data redundancy in RADAR-base

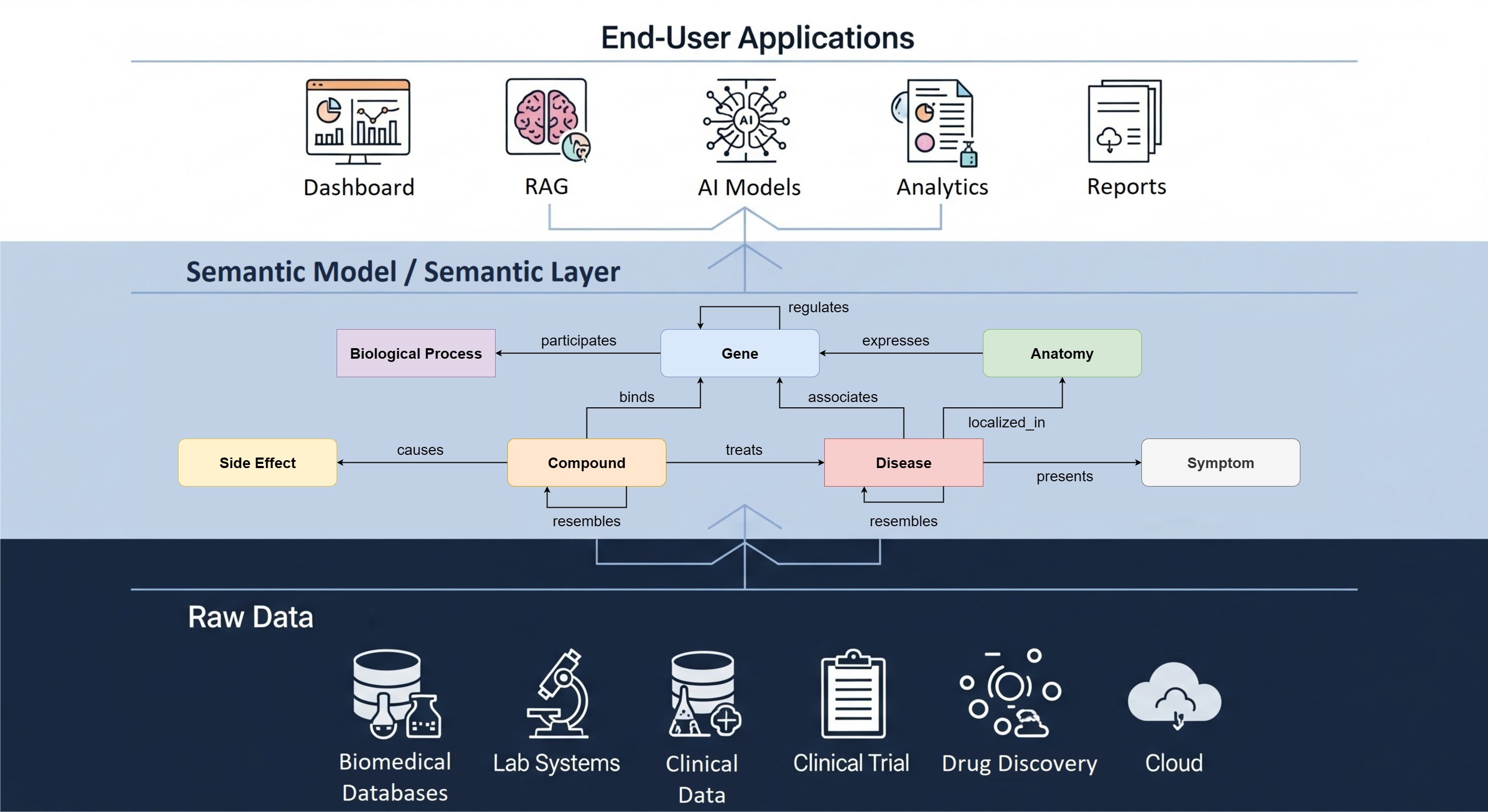

Let’s now discuss how the platform ingests and processes data from the wearable devices and how we prevent data loss here. As shown in the image above, data gets uploaded from a device to a redundant processing queue and then gets stored to a target redundant storage platform.

Data ingestion starts when a client uploads data. This upload needs to be handled conservatively: it is only accepted once the data has been written to redundant storage. Otherwise, the client has to retry the request until the platform is sure it can handle it correctly.
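The client side of this contract can be sketched as follows. This is an illustration only, not the RADAR-base client code: `send` is a hypothetical callable that returns `True` only when the platform has acknowledged the batch, and the retry count and backoff values are assumptions. The key point is that a batch leaves the local queue only after it has been acknowledged.

```python
import time


def upload_with_retry(send, batch, max_attempts=5, base_delay=1.0,
                      sleep=time.sleep):
    """Call send(batch) until it is acknowledged or attempts run out."""
    for attempt in range(max_attempts):
        try:
            if send(batch):
                return True
        except OSError:
            pass  # network failure: treat like a missing acknowledgement
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # exponential backoff
    return False


def drain(queue, send, **kwargs):
    """Upload queued batches in order; keep anything not acknowledged."""
    while queue:
        if not upload_with_retry(send, queue[0], **kwargs):
            break  # leave the batch in the queue for a later attempt
        queue.pop(0)
    return queue
```

Because a batch may be sent more than once when an acknowledgement is lost in transit, the receiving side has to tolerate duplicates.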

The redundant storage, in our case Apache Kafka, is a temporary queue where items can persist for a month. No processing is done in that queue – it is just a place to securely and redundantly store data until it can be persisted to a target output system. An added benefit of Kafka is that post-processing and monitoring can happen within the same queueing framework.
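As a rough illustration of what such a setup can look like, the settings below use standard Kafka configuration keys. The values are assumptions for the sake of the example (including reading "a month" as 30 days) and are not taken from an actual RADAR-base deployment.

```python
from datetime import timedelta

RETENTION = timedelta(days=30)  # assumption: "a month" taken as 30 days

# Topic-level settings (illustrative values).
topic_config = {
    "retention.ms": str(int(RETENTION.total_seconds() * 1000)),
    "min.insync.replicas": "2",
}
REPLICATION_FACTOR = 3  # number of brokers holding a copy of each partition

# Producer settings for the component that accepts uploads (illustrative).
producer_config = {
    "acks": "all",                # wait for the in-sync replicas
    "enable.idempotence": "true",  # avoid duplicates on producer retries
}
```

With these values, a write is acknowledged only after it is on at least two in-sync replicas, so the loss of a single broker does not lose acknowledged data.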

Another process writes data to the target storage system where data scientists can analyse the wearable data. That process does not alter the data in the queue; it just keeps track of which part of the queue it has already processed. The target storage system should again be set up with redundancy in mind: it is the final storage location for the output data. At The Hyve, we have worked with AWS S3 compatible targets, as well as HDFS and Azure Blob Storage. This process is much less time-critical, so data can be batched before ending up in this location. It also means that I/O characteristics are less important than those of the streaming data system. The most important thing really is to have this target redundant and backed up regularly.
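The offset bookkeeping described here can be sketched in a few lines. This is a simplified model, not the RADAR-base output connector: a Python list stands in for the queue, and `write_batch` is a hypothetical callable that writes to the target storage and raises `OSError` on failure. The offset only advances after a batch has been written successfully, so a failed run can be resumed without skipping data.

```python
def export_batches(log, committed_offset, write_batch, batch_size=3):
    """Copy records from an append-only log to target storage in batches.

    log: list standing in for the queue; it is only read, never modified.
    committed_offset: index of the first record not yet exported.
    Returns the new committed offset.
    """
    offset = committed_offset
    while offset < len(log):
        batch = log[offset:offset + batch_size]
        try:
            write_batch(batch)
        except OSError:
            break  # keep the old offset; this batch is retried next run
        offset += len(batch)  # advance only after a successful write
    return offset
```

If a write succeeds but the process stops before the new offset is stored, the same batch is written again on the next run. This at-least-once behaviour is why the target should be able to deduplicate or overwrite records.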

Platform-wide strategies

The fact that data remains in Kafka for a limited time gives a fixed time window in which any issues with data integrity and collection should be noticed and resolved. This brings me to overall system resiliency. All the measures above focus on single components. However, a single component can always fail even if the data on it is stored redundantly.

Component failure should be handled in two ways: early detection of failure and backups in case a failure does occur. The nature of a remote device and a local queue makes them hard to back up, so the focus there is on monitoring and system recovery.

An orchestration platform like Kubernetes helps with speedy platform recovery and quickly gets stopped services up and running again. It can also be integrated with a Prometheus monitoring solution to keep track of the health of the platform and all its components. Kubernetes uses redundancy in the form of scaling up services and distributing components over multiple compute nodes.

Finally, as with any IT system, backups are key to recovering data when data loss happens even after all of the above measures have been taken. Target systems like AWS S3 are themselves already backed up. Other than that, our most valuable asset in the system is the user information, so the user management database needs to be backed up regularly as well.

The participant devices and the platform data queue are only meant to be temporary storage locations. The most important step there is to monitor their health to prevent data accumulating on the device and to ensure that data ends up in the target storage as fast as possible.
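One simple health signal for the queue is how close the oldest not-yet-exported record is to the end of the retention period. The sketch below assumes a 30-day retention and a 7-day warning margin; both values and the function names are hypothetical, and in practice such a check would typically be wired into the monitoring system rather than run by hand.

```python
from datetime import datetime, timedelta, timezone


def retention_margin(oldest_unexported, now, retention=timedelta(days=30)):
    """Time left before the oldest unexported record expires from the queue."""
    return retention - (now - oldest_unexported)


def export_is_at_risk(oldest_unexported, now,
                      retention=timedelta(days=30),
                      warn_below=timedelta(days=7)):
    """True when unexported data is within warn_below of being dropped."""
    return retention_margin(oldest_unexported, now, retention) < warn_below
```

A negative margin means data has most likely already expired from the queue, which is exactly the situation the warning is meant to prevent.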

To summarize, the strategies we employ to avoid data loss when collecting wearable device and patient data in a remote clinical trial are: data redundancy; code that anticipates component failure; continuous, or at least frequent, monitoring on both a technical and a human level; and, finally, backing up the data that absolutely should not be lost.